C: Assignments Day 4

Problem 1: Parallelize pi.c using MPI

Today we are going to parallelize the pi.c code you developed for day 1. To run at TACC you will need to use either idev or sbatch.

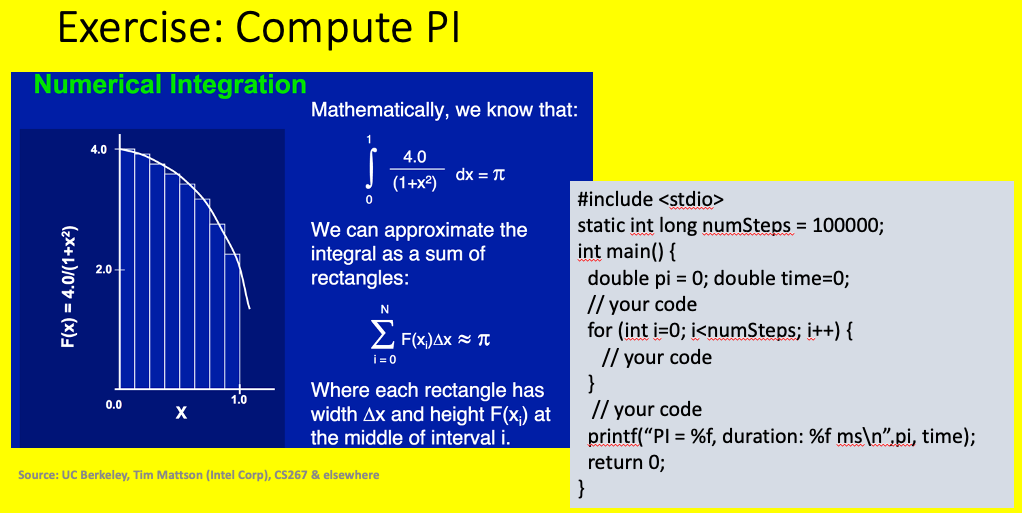

The figure shows a method to compute pi by numerical integration. We would like you to implement that computation in a C program.

Computation of pi numerically

    #include <stdio.h>
    #include <time.h>
    #include <math.h>

    static long int numSteps = 1000000000;

    int main() {

      // perform calculation
      double pi = 0;
      double dx = 1./numSteps;
      double x = dx*0.50;

      for (int i=0; i<numSteps; i++) {
        pi += 4./(1.+x*x);
        x += dx;
      }

      pi *= dx;

      printf("PI = %16.14f Difference from math.h definition %16.14f \n",pi, pi-M_PI);
      return 0;
    }
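For reference, the loop in the listing above can be read as a midpoint-rule approximation of an integral whose exact value is pi. The symbols $N$ (for `numSteps`), $\Delta x$ (for `dx`) and $x_i$ are notation introduced here for illustration; they do not appear in the original code.

$$
\pi = \int_0^1 \frac{4}{1+x^2}\,dx \;\approx\; \Delta x \sum_{i=0}^{N-1} \frac{4}{1+x_i^2},
\qquad x_i = \left(i + \tfrac{1}{2}\right)\Delta x, \quad \Delta x = \frac{1}{N}
$$

The code starts with `x = dx*0.50`, which is $x_0$, and advances with `x += dx`, so each pass of the loop adds one term of the sum; the final `pi *= dx` applies the common factor $\Delta x$.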

Note

When compiling at TACC, if you wish to use gcc as I have done, issue the following command when you log in.

module load gcc

When building and testing that the application works, use idev, as I have been showing in the videos.

When launching the job to test the performance, you will need to use sbatch and place your job in the queue. To do this, you need to create a script that will be launched when the job runs. I have placed two scripts in each of the file folders. The script informs the system how many nodes and cores per node to use, what the expected run time is, and how to run the job. Once the executable exists, the job is launched using the following command issued from a login node:

sbatch submit.sh

Full documentation on submitting scripts for OpenMP and MPI can be found online at TACC.

Warning

Our solution of pi.c as written has a loop dependency (each value of x is computed from the previous one), which you may need to revise for tomorrow's OpenMPI problem.

You are to modify the pi.c application to use MPI and run it. I have included a few files in code/parallel/ExercisesDay4/ex1 to help you. They include pi.c above, gather1.c, and a submit.sh script. gather1.c was presented in the video and is shown below:

    #include <mpi.h>
    #include <stdio.h>
    #include <stdlib.h>
    #define LUMP 5

    int main(int argc, char **argv) {

      int numP, procID;

      // the usual mpi initialization
      MPI_Init(&argc, &argv);
      MPI_Comm_size(MPI_COMM_WORLD, &numP);
      MPI_Comm_rank(MPI_COMM_WORLD, &procID);

      int *globalData=NULL;
      int localData[LUMP];

      // process 0 is only 1 that needs global data
      if (procID == 0) {
        globalData = malloc(LUMP * numP * sizeof(int) );
        for (int i=0; i<LUMP*numP; i++)
          globalData[i] = 0;
      }

      for (int i=0; i<LUMP; i++)
        localData[i] = procID*10+i;

      MPI_Gather(localData, LUMP, MPI_INT, globalData, LUMP, MPI_INT, 0, MPI_COMM_WORLD);

      if (procID == 0) {
        for (int i=0; i<numP*LUMP; i++)
          printf("%d ", globalData[i]);
        printf("\n");
      }

      if (procID == 0)
        free(globalData);

      MPI_Finalize();
    }

The submit script is as shown below.

    #!/bin/bash
    #--------------------------------------------------------------------
    # Generic SLURM script - MPI Hello World
    #
    # This script requests 1 node (-N 1) and 4 MPI tasks (-n 4),
    # out of a total of 64 cores available on the node.
    #---------------------------------------------------------------------
    #SBATCH -J myjob
    #SBATCH -o myjob.%j.out
    #SBATCH -e myjob.%j.err
    #SBATCH -p development
    #SBATCH -N 1
    #SBATCH -n 4
    #SBATCH -t 00:02:00
    #SBATCH -A DesignSafe-SimCenter

    ibrun ./pi

Problem 2: Compute the Norm of a vector using MPI

Given what you just did with pi, can you now write a program to compute the norm of a vector? In the ex2 directory I have placed a file, scatterArray.c. This file uses MPI_Scatter to send components of the vector to the different processes in the running parallel application.

    #include <stdio.h>
    #include <stdlib.h>
    #include <mpi.h>

    int main(int argc, char** argv) {

      int procID, numP;

      double* globalVector = NULL;
      double* localVector = NULL;

      MPI_Init(&argc, &argv);
      MPI_Comm_rank(MPI_COMM_WORLD, &procID);
      MPI_Comm_size(MPI_COMM_WORLD, &numP);

      if (argc != 2) {
        printf("Error correct usage: app vectorSize\n");
        MPI_Finalize();  // shut MPI down cleanly before leaving
        return 0;
      }
      int vectorSize = atoi(argv[1]);
      int remainder = vectorSize % numP;  // entries left over after the scatter

      // Only the root process initializes the global array
      if (procID == 0) {
        globalVector = (double*)malloc(sizeof(double) * vectorSize);
        srand(50);
        for (int i = 0; i < vectorSize; i++) {
          double random_number = 1.0 + (double)rand() / RAND_MAX;
          globalVector[i] = random_number;
        }
      }

      // Determine the size of the local array for each process
      int localSize = vectorSize / numP;

      // Allocate memory for the local array
      localVector = (double*)malloc(sizeof(double) * localSize);

      // Scatter the global array to all processes
      MPI_Scatter(globalVector, localSize, MPI_DOUBLE,
                  localVector, localSize, MPI_DOUBLE,
                  0, MPI_COMM_WORLD);

      // Print the local array for each process
      printf("Process %d received: ", procID);
      for (int i = 0; i < localSize; i++) {
        printf("%.2f ", localVector[i]);
      }
      printf("\n");

      // process0 has some stuff in the globalArray that was not sent!
      if (procID == 0) {
        printf("Process 0 Additional NOT SENT still in globalVector: ");
        for (int i=numP*localSize; i<vectorSize; i++)
          printf("%.2f ", globalVector[i]);
        printf("\n");
      }

      // Clean up memory
      free(globalVector);
      free(localVector);

      MPI_Finalize();
      return 0;
    }

Note

The vector size may not always be divisible by the number of processes. In such a case there will be additional terms not sent. Don’t forget to include them in the computation!

Problem 3: Bonus Parallelize your matMul solution using MPI

If you want a more complicated problem to parallelize, I suggest parallelizing your matMul application from Day 2.