4.7. E8 - Hurricane Wind


Hurricane Laura made landfall as a strong Category 4 storm near Cameron, LA, in the early hours of 27 August 2020, tying the Last Island Hurricane of 1856 as the strongest land-falling hurricane in Louisiana history. This example presents a wind-induced damage assessment for Lake Charles, LA. Peak wind speed data for Hurricane Laura from Applied Research Associates, Inc. are used as the intensity measure. Inventory data for about 26,000 wood residential buildings are used together with the developed rulesets, which map the inventory to a HAZUS-type damage assessment. The final results include the damage and loss estimates along with the building information models generated by the rulesets.

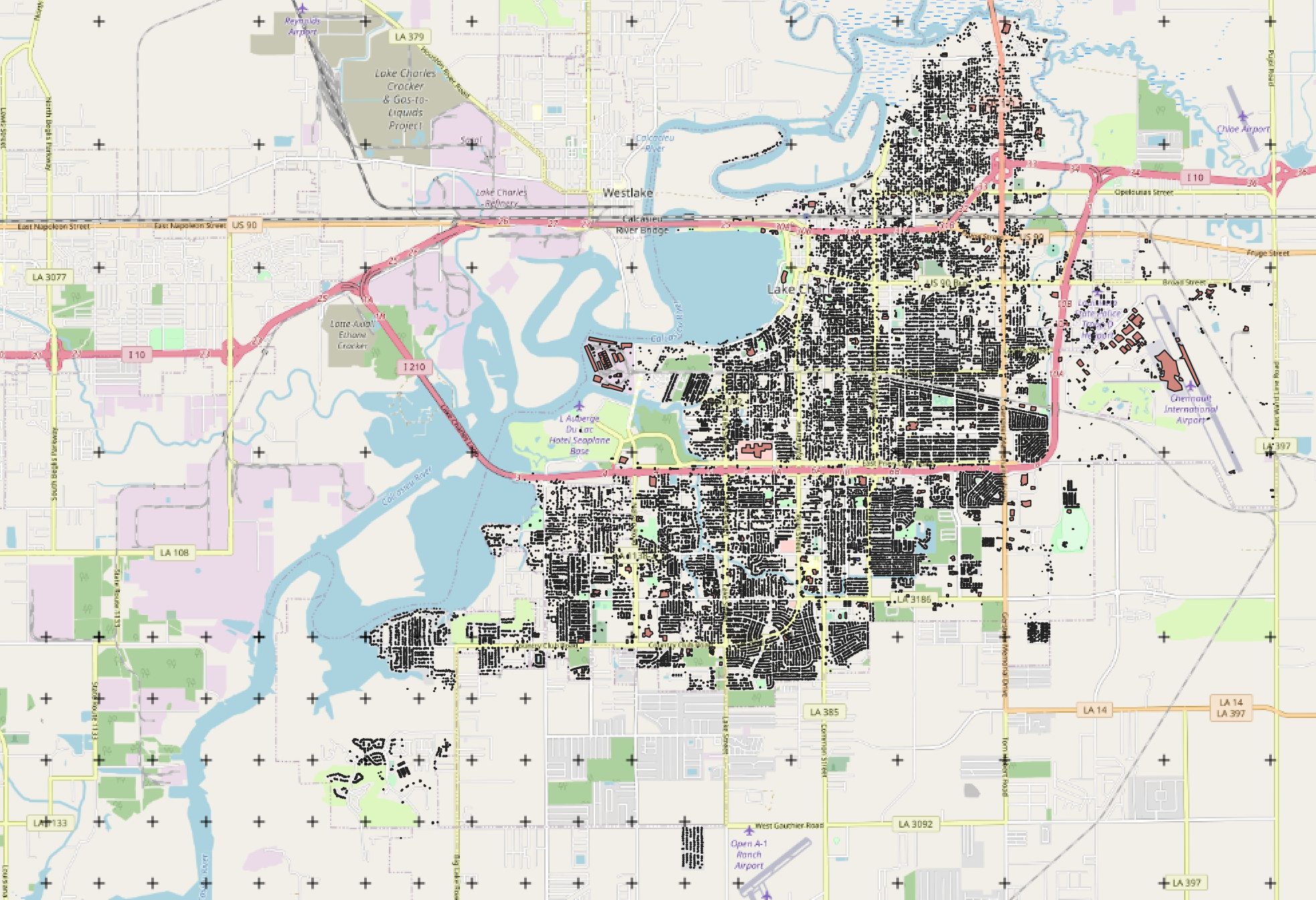

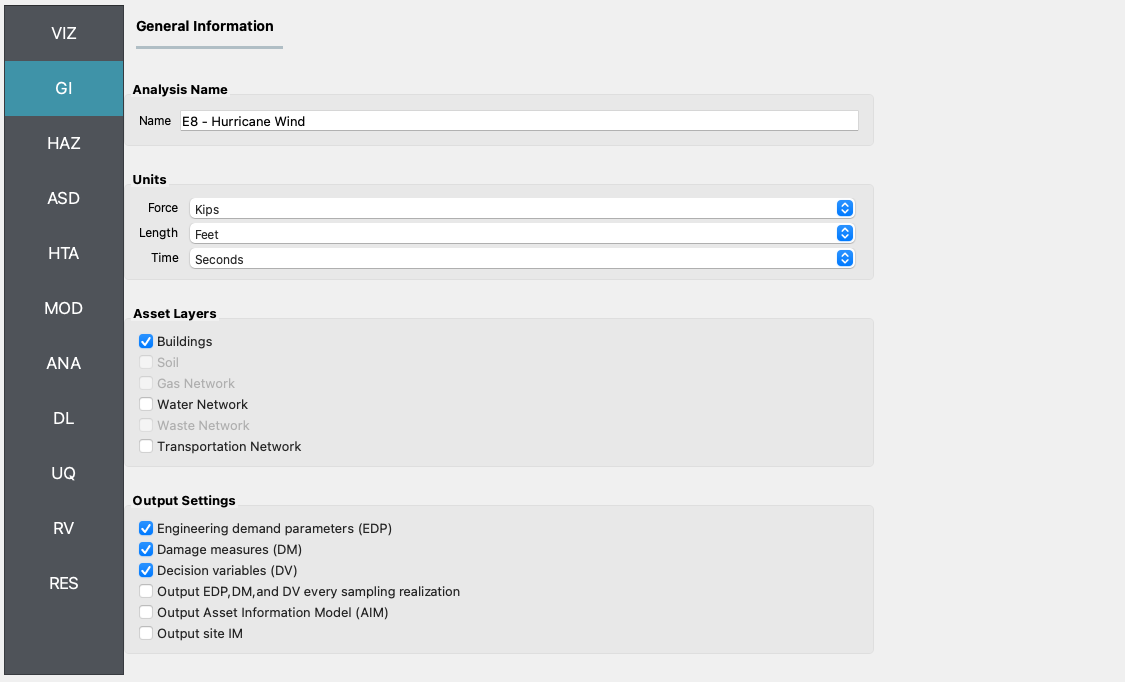

Set the units in the GI panel as shown in Fig. 4.7.1 and select the output files of interest.

Fig. 4.7.1 R2D GI setup.

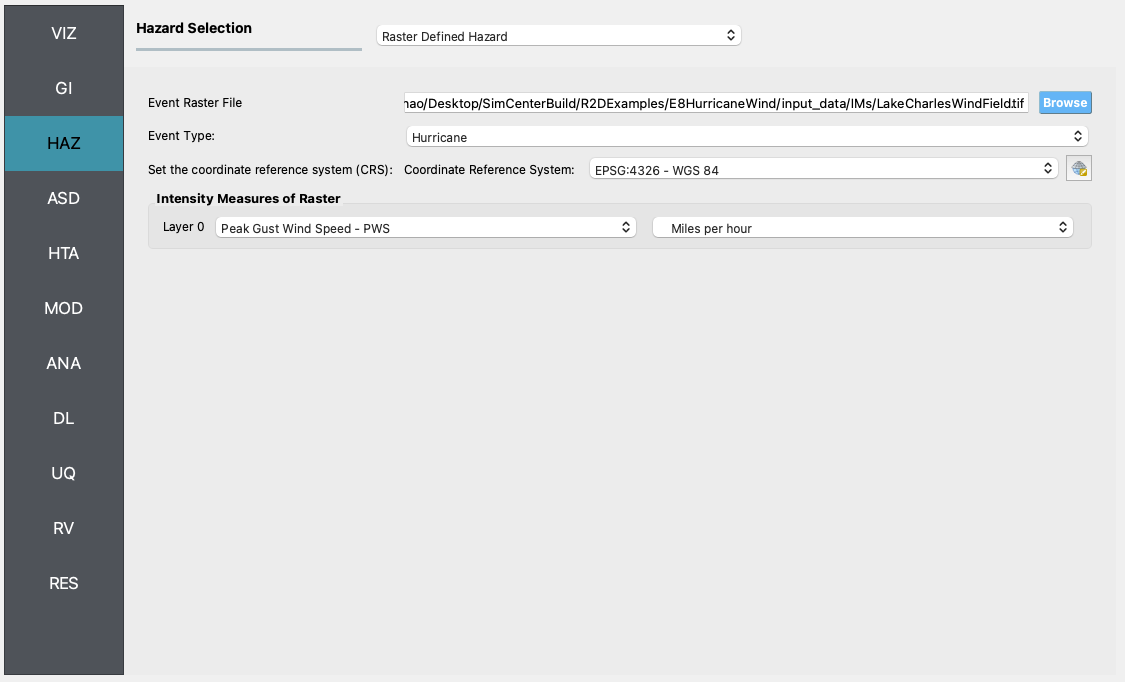

The Raster Defined Hazard option in the HAZ panel is used to define the peak gust wind speed field over the region (Fig. 4.7.2).
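Conceptually, a raster-defined hazard is a grid of intensity values, and each building receives the value of the grid cell that contains it. The sketch below illustrates that lookup for a simplified north-up grid in longitude/latitude. The `RasterGrid` class, its fields, and the numbers are hypothetical and made up for illustration; R2D's own raster handling is not reproduced here.

```python
import math
from dataclasses import dataclass


@dataclass
class RasterGrid:
    """Simplified north-up raster in geographic coordinates (illustrative only)."""
    x_origin: float     # longitude of the upper-left grid corner
    y_origin: float     # latitude of the upper-left grid corner
    cell_width: float   # cell size in degrees of longitude
    cell_height: float  # cell size in degrees of latitude (positive number)
    values: list        # rows of cell values; row 0 is the northernmost row

    def sample(self, lon: float, lat: float) -> float:
        """Return the value of the cell that contains (lon, lat)."""
        col = math.floor((lon - self.x_origin) / self.cell_width)
        row = math.floor((self.y_origin - lat) / self.cell_height)
        if not (0 <= row < len(self.values) and 0 <= col < len(self.values[0])):
            raise ValueError(f"point ({lon}, {lat}) lies outside the raster")
        return self.values[row][col]


# Made-up 2 x 2 grid of peak gust speeds; not Hurricane Laura data
grid = RasterGrid(-93.3, 30.3, 0.1, 0.1, [[140.0, 135.0], [130.0, 125.0]])
print(grid.sample(-93.15, 30.15))  # → 125.0
```

Real hazard rasters carry their georeferencing in the file itself and are usually read with a GIS library; the flat list of rows here only keeps the example self-contained.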

Fig. 4.7.2 R2D HAZ setup.

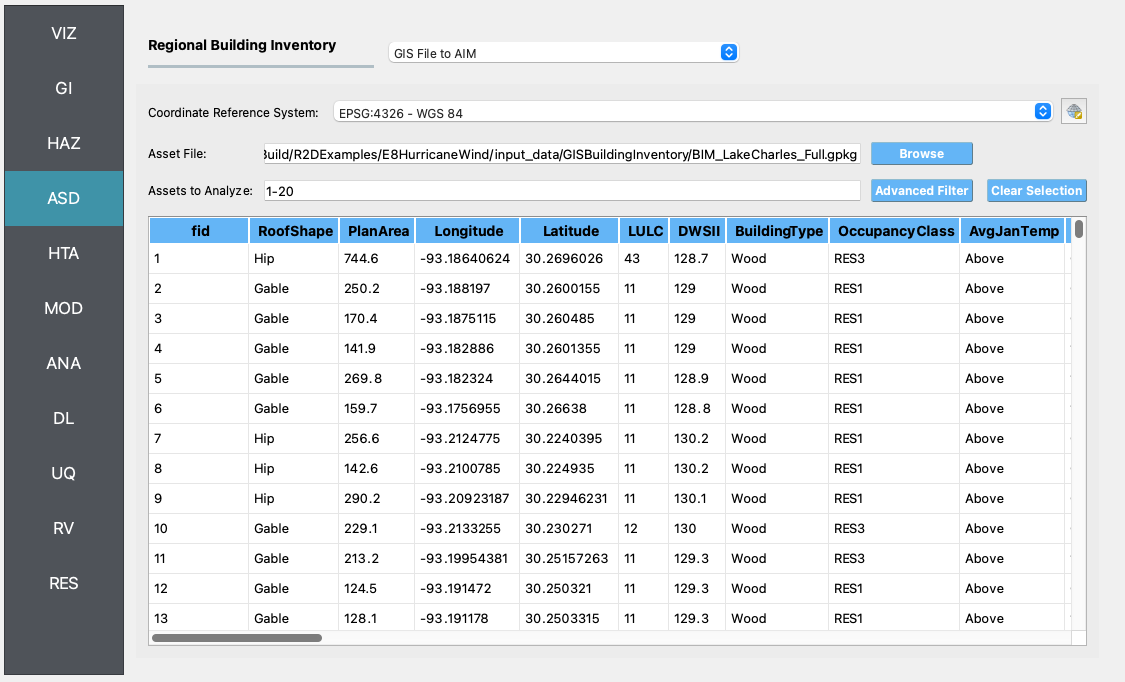

Download BIM_LakeCharles_Full.csv from DesignSafe PRJ-3207 (in the 01. Input: BIM - Building Inventory Data folder). Select CSV to BIM in the ASD panel and set the Import Path to “BIM_LakeCharles_Full.csv” (Fig. 4.7.3). Specify the IDs of the buildings to include in the simulation (e.g., 1-26516 for the entire inventory). Running the entire inventory may take a very long time on a local machine, so it is suggested to first test a small sample, such as 1-100, locally and then submit the full run to DesignSafe (see Fig. 4.7.10 for details).
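To make the ID filter concrete, the hypothetical helper below expands a filter string such as "1-100" into the list of building IDs it selects, assuming comma-separated single IDs and inclusive ranges. The function name and the accepted syntax are assumptions for illustration; consult the R2D User Guide for the exact filter format R2D accepts.

```python
def parse_id_filter(spec: str) -> list[int]:
    """Expand a hypothetical filter like "1-100, 205" into sorted building IDs.

    Ranges are inclusive on both ends; duplicates are removed.
    """
    ids = set()
    for token in spec.split(","):
        token = token.strip()
        if not token:
            continue  # tolerate stray commas
        if "-" in token:
            start, end = (int(part) for part in token.split("-", 1))
            if start > end:
                raise ValueError(f"invalid range: {token}")
            ids.update(range(start, end + 1))
        else:
            ids.add(int(token))
    return sorted(ids)


print(len(parse_id_filter("1-100")))  # → 100
```

With this reading, "1-26516" selects all 26,516 buildings, which is why the small 1-100 sample is the cheaper local test.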

Fig. 4.7.3 R2D ASD setup.

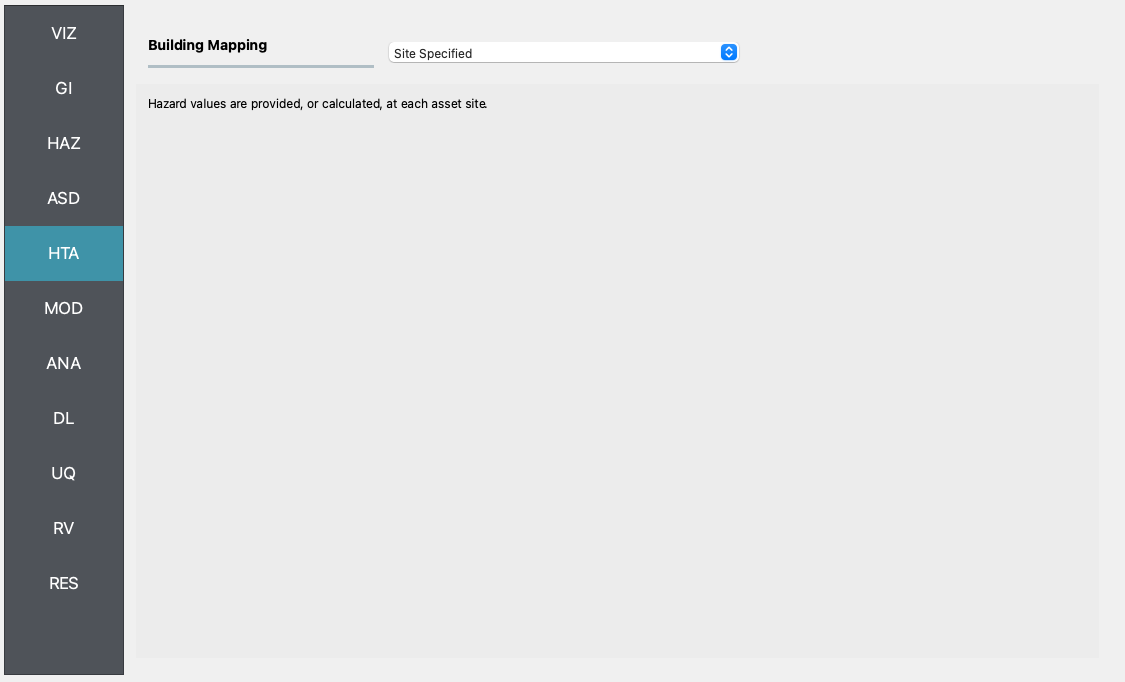

Select the Site Specified option in the HTA panel (Fig. 4.7.4).

Fig. 4.7.4 R2D HTA setup.

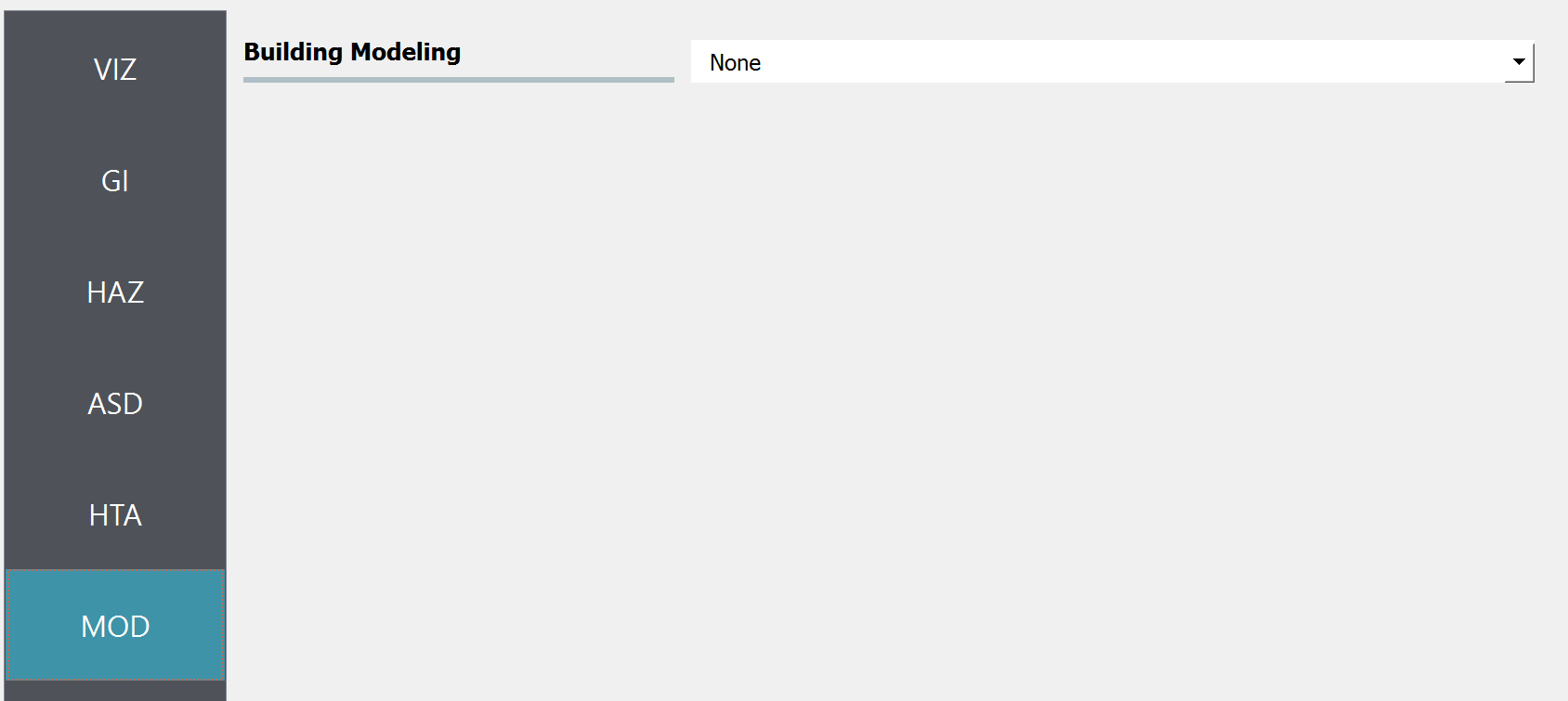

Set “Building Modeling” in the MOD panel to “None” (Fig. 4.7.5).

Fig. 4.7.5 R2D MOD setup.

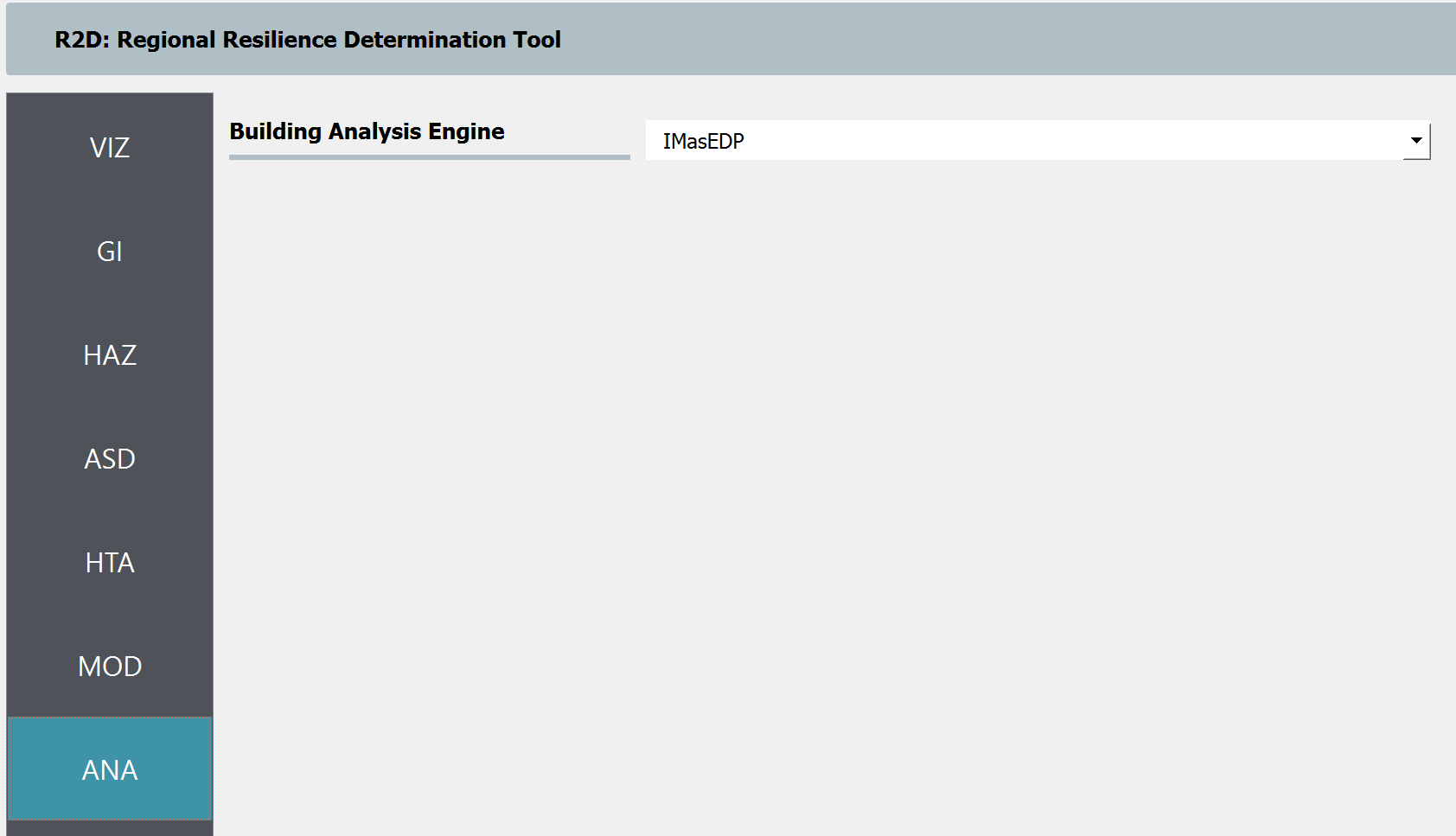

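Set “Building Analysis Engine” in the ANA panel to “IMasEDP” (Fig. 4.7.6), so that the intensity measure (peak gust wind speed) is used directly as the engineering demand parameter.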
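With IMasEDP there is no structural analysis step: the wind speed at the building site is handed to the damage assessment as the demand. The sketch below shows that pass-through followed by a generic lognormal fragility evaluation. The `"PWS"` key, the function names, and any median/dispersion values you plug in are hypothetical; the actual HAZUS MH HU fragility functions used by R2D may take a different form.

```python
import math


def im_as_edp(peak_gust: float) -> dict:
    """Under IMasEDP, the intensity measure is passed through unchanged as the demand."""
    # "PWS" (peak wind speed) is a hypothetical key, not R2D's actual field name
    return {"PWS": peak_gust}


def lognormal_fragility(demand: float, median: float, beta: float) -> float:
    """Probability of reaching or exceeding a damage state given the demand.

    Generic lognormal fragility: P = Phi(ln(demand / median) / beta).
    """
    if demand <= 0.0:
        return 0.0
    z = math.log(demand / median) / beta
    # Standard normal CDF written in terms of the error function
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


edp = im_as_edp(130.0)
print(lognormal_fragility(edp["PWS"], median=130.0, beta=0.2))  # → 0.5
```

When the demand equals the median, the exceedance probability is exactly 0.5, which is a quick sanity check for any fragility parameters you try.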

Fig. 4.7.6 R2D ANA setup.

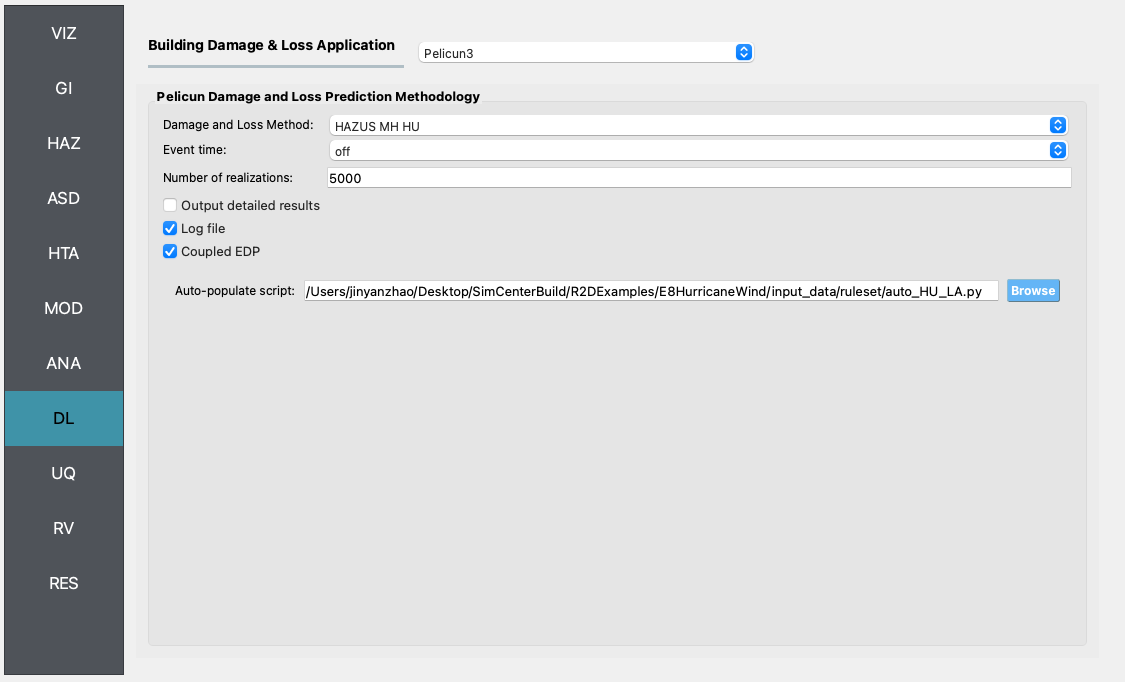

Set “Damage and Loss Method” in the DL panel to “HAZUS MH HU”. Download the ruleset scripts from DesignSafe PRJ-3207 (in the 03. Input: DL - Rulesets for Asset Representation/scripts folder) and set the Auto populate script to “auto_HU_LA.py” (Fig. 4.7.7). Place the ruleset scripts in a dedicated folder so that the application can copy and load them later.
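An auto-populate ruleset maps the attributes of each inventory record to the building class used by the damage and loss method. The toy rule below illustrates the idea only. The function name, the attribute names, and the thresholds are hypothetical; the class labels follow HAZUS-style naming, and the actual rules in auto_HU_LA.py are likely far more detailed.

```python
def hypothetical_hu_class(bim: dict) -> str:
    """Toy ruleset: map a few inventory attributes to a HAZUS-style class label.

    Attribute names, values, and thresholds are hypothetical and do not
    reproduce auto_HU_LA.py.
    """
    if bim["BuildingMaterial"] != "Wood":
        raise ValueError("this toy rule only covers wood buildings")
    stories = int(bim["NumberOfStories"])
    if bim["OccupancyClass"] == "RES1":
        # Wood single-family: one story vs. two or more stories
        return "WSF1" if stories == 1 else "WSF2"
    # Other wood residences treated as multi-unit housing, capped at 3 stories
    return f"WMUH{min(stories, 3)}"


record = {"BuildingMaterial": "Wood", "OccupancyClass": "RES1", "NumberOfStories": 2}
print(hypothetical_hu_class(record))  # → WSF2
```

Keeping each rule a plain function of one record is what lets the application apply the ruleset building by building, and in parallel on a cluster.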

Fig. 4.7.7 R2D DL setup.

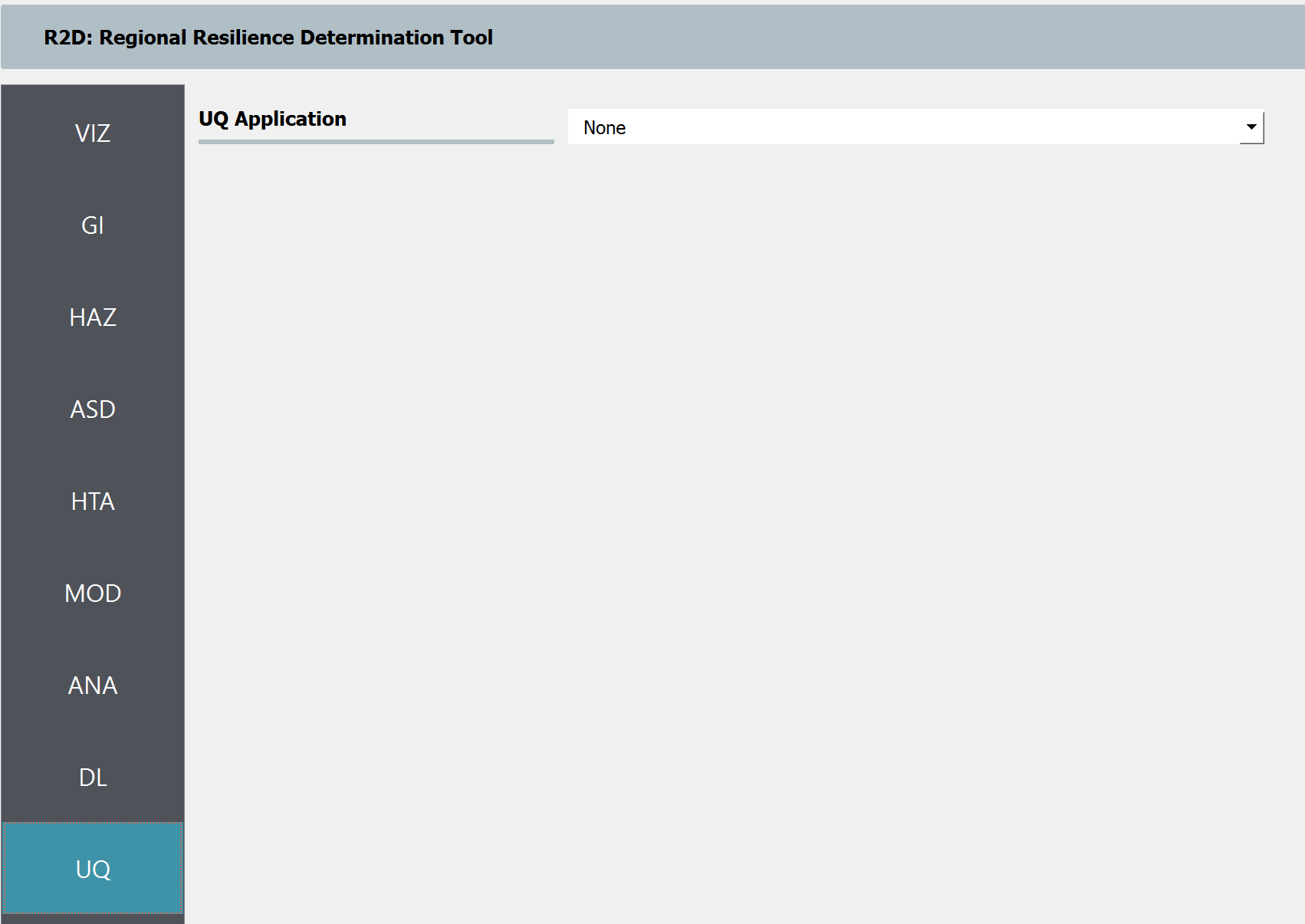

Set “UQ Application” in the UQ panel to “None” (Fig. 4.7.8).

Fig. 4.7.8 R2D UQ setup.

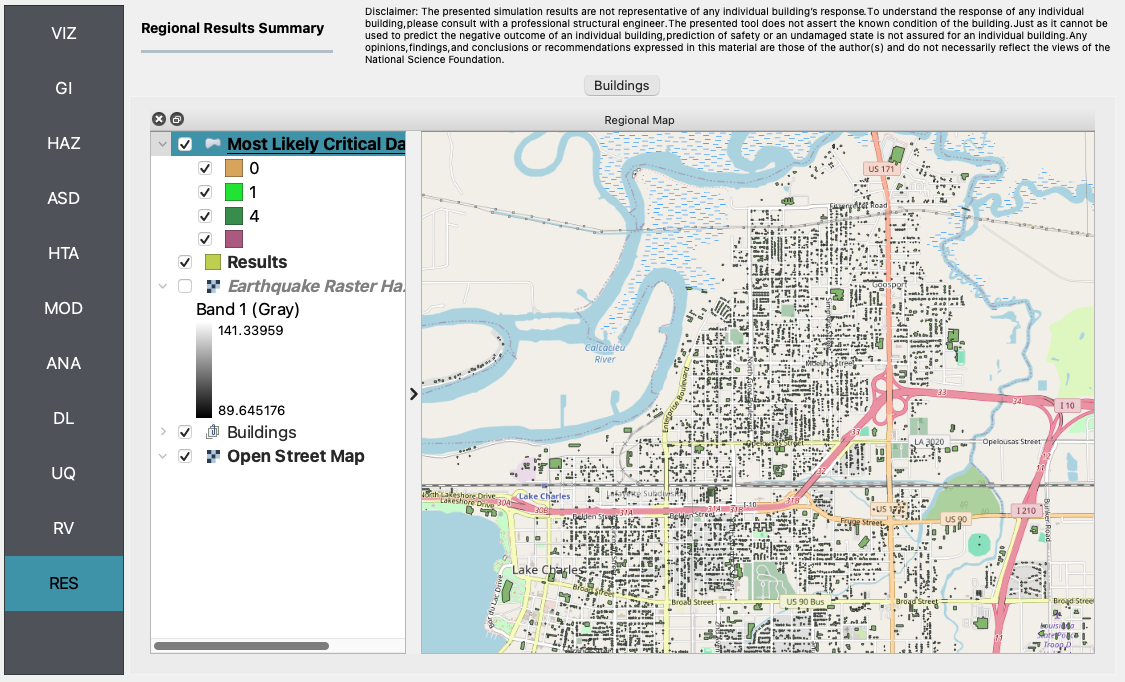

After setting up the simulation, click RUN to execute the analysis. Once the simulation is complete, the application directs you to the RES panel (Fig. 4.7.9), where you can examine and export the results.

Fig. 4.7.9 R2D RES panel.

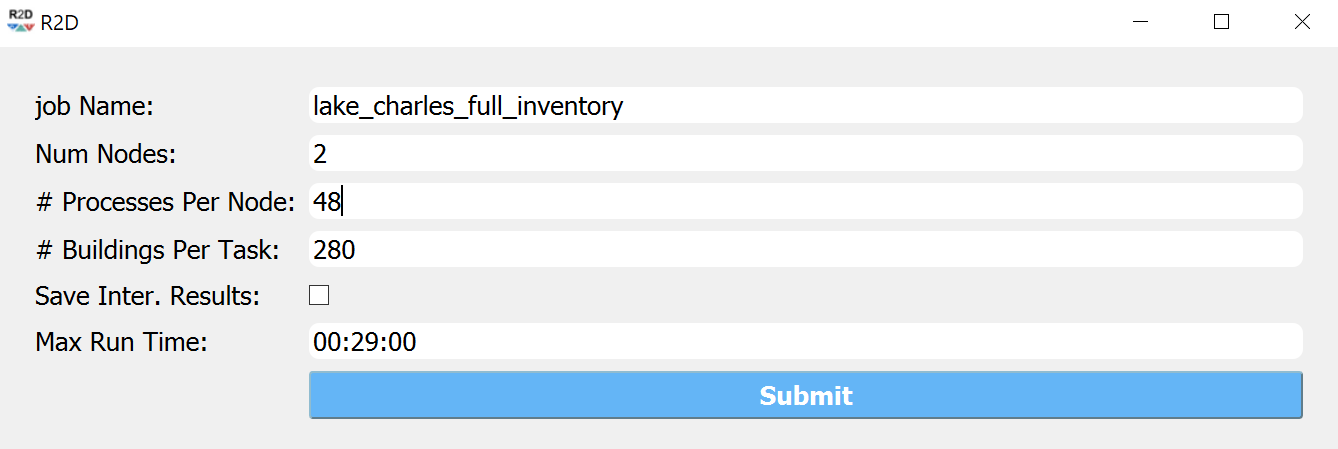

To simulate damage and loss over a large region of interest (remember to reset the building IDs in the ASD panel), it is more efficient to submit the job to DesignSafe and run it on Stampede2. This can be done in R2D by clicking RUN at DesignSafe (a valid DesignSafe account is required to log in and access the computing resources). Fig. 4.7.10 shows an example configuration for this analysis (see the R2D User Guide for detailed descriptions). On Stampede2, the individual building simulations run in parallel, which accelerates the process. For the entire building inventory in this testbed, it is suggested to request 15 minutes of run time on 96 Skylake (SKX) cores (e.g., 2 nodes with 48 processors per node). The job fails with an error message if the requested run time is not sufficient to complete the analysis. Note that the product of the number of nodes, the number of processors per node, and the number of buildings per task must be greater than or equal to the total number of buildings in the inventory to be analyzed.
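The sizing rule above can be checked with a short calculation. The hypothetical helper below returns the smallest number of buildings per task that satisfies nodes × processors per node × buildings per task ≥ number of buildings, using the full inventory size (IDs 1-26516) and the suggested 2 × 48 SKX cores.

```python
def min_buildings_per_task(n_buildings: int, n_nodes: int, procs_per_node: int) -> int:
    """Smallest buildings-per-task value with n_nodes * procs_per_node * bpt >= n_buildings."""
    n_tasks = n_nodes * procs_per_node
    # Integer ceiling division avoids floating-point rounding
    return -(-n_buildings // n_tasks)


bpt = min_buildings_per_task(26516, 2, 48)
print(bpt)  # → 277
print(2 * 48 * bpt)  # → 26592, which covers all 26516 buildings
```

With 276 buildings per task the 96 cores would cover only 26,496 buildings, so 277 is the minimum for this configuration.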

Fig. 4.7.10 R2D - Run at DesignSafe (configuration).

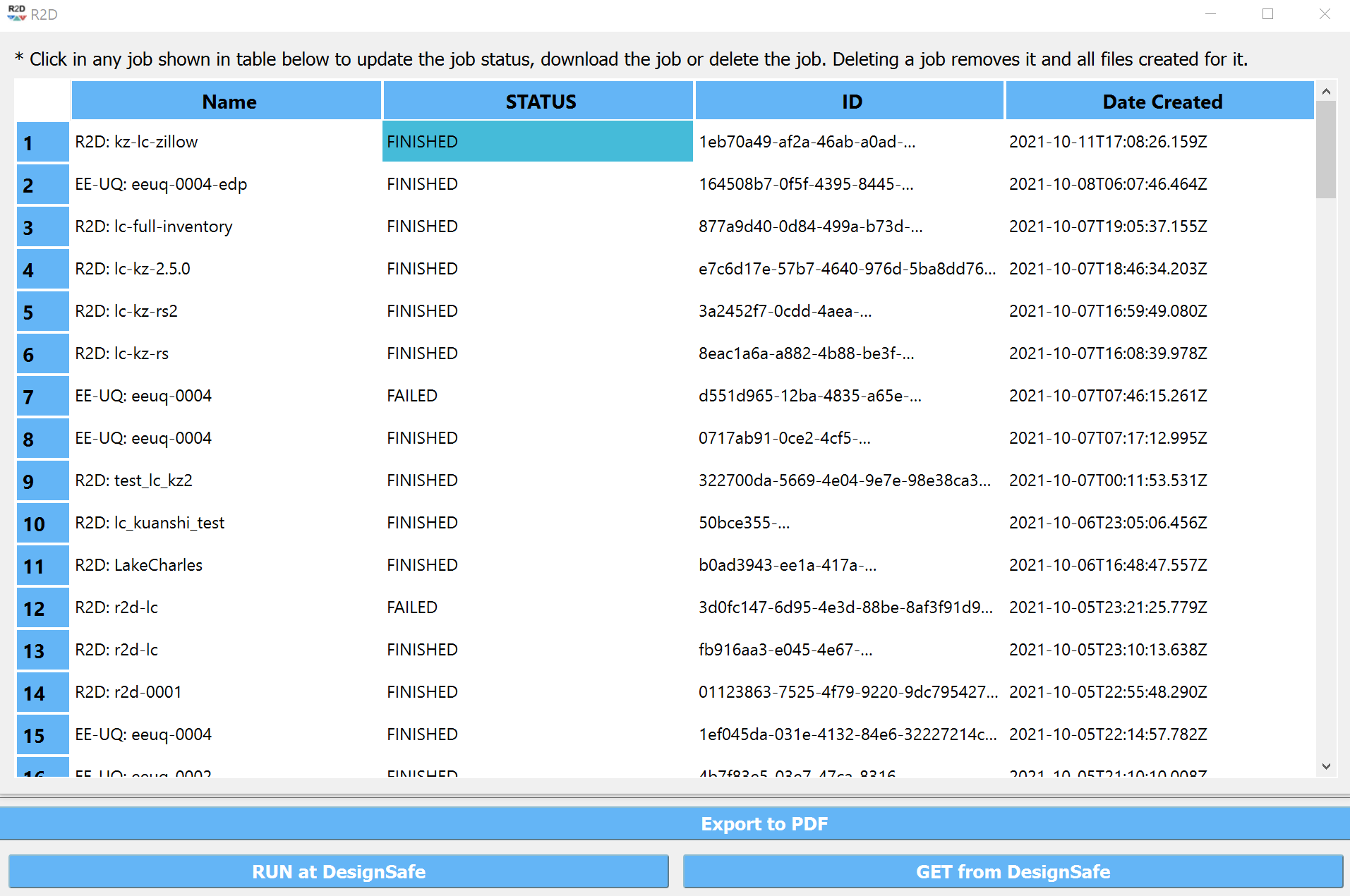

Users can monitor the job status and retrieve the result data with the GET from DesignSafe button (Fig. 4.7.11). The retrieved data include four major result files, i.e., BIM.hdf, EDP.hdf, DM.hdf, and DV.hdf. R2D also automatically converts the hdf files to csv files, which are easier to work with. While R2D provides basic visualization functionality (Fig. 4.7.9), users can also access the downloaded data directly in the remote work directory, e.g., /Documents/R2D/RemoteWorkDir (this directory is machine specific and can be found in File->Preferences->Remote Jobs Directory). With these result files, users can extract and process the information of interest; the next section uses the results from this testbed as an example to discuss this in more detail.
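As a starting point for post-processing the converted csv files, the sketch below tallies buildings per damage state using only the Python standard library. The column name `"DS"` is a hypothetical placeholder, and the sketch assumes one damage-state value per building row; check the headers of the csv files R2D actually writes and adapt the column name (or the aggregation) to match.

```python
import csv
from collections import Counter
from typing import Iterable


def read_damage_table(lines: Iterable[str]) -> list[dict]:
    """Parse CSV text (an open file or any iterable of lines) into row dicts."""
    return list(csv.DictReader(lines))


def damage_state_counts(rows: Iterable[dict], column: str = "DS") -> Counter:
    """Count buildings per damage state; "DS" is a hypothetical column name."""
    return Counter(row[column] for row in rows)


# Tiny inline demo table; for real results, pass an open file handle instead,
# e.g. open(path_to_converted_csv, newline="")
demo = read_damage_table(["id,DS", "1,0", "2,1", "3,1"])
print(damage_state_counts(demo))  # → Counter({'1': 2, '0': 1})
```

Because `csv.DictReader` accepts any iterable of lines, the same functions work on an open file from the remote work directory and on in-memory text for quick tests.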

Fig. 4.7.11 R2D GET from DesignSafe.